Mechanical models versus Intuition: A personal journey (Part 3)

When it comes to making critical decisions, especially in professional contexts, the battle often boils down to our gut instincts versus mechanical or evidence-based models. Having journeyed through both terrains, I'd like to share a personal reflection, underlining the importance of relying on mechanical models and the pitfalls of trusting one's intuition.

When Intuition Failed Me

Several years ago, I was in charge of recruiting a new team member for a high-profile project. Despite having a rich pool of candidates with excellent credentials, one particular candidate stood out to me during our conversation. We connected instantly, our discussion was fluid, and I felt they perfectly understood the essence of the project. Their passion was palpable, and my gut told me they were the ideal fit. On paper, they ticked most boxes but fell short in some technical expertise areas. However, my intuition urged me to emphasize our connection and the potential I perceived.

Fast forward a few months, and while their passion was still evident, the gaps in technical expertise became glaring. Deadlines were missed, and I often found myself mediating between this individual and other team members as tensions rose. It was evident that I had made a choice based on intuition rather than objective criteria, and the project was suffering for it. This experience was a pivotal lesson for me about the risks of relying solely on intuition.

Embracing Mechanical Models: A Game Changer

After that experience, I set out to better understand and implement mechanical or evidence-based models in my decision-making processes, especially when recruiting. Here's how I went about it:

- Standardized Evaluation Process: I implemented a consistent and standardized process for assessing candidates. This included objective assessments that measured both soft and hard skills, ensuring we had a holistic view of a candidate's capabilities.

- Weighted Scoring: Using the Evidence-Based Selection principles, I ensured that the information about each candidate was weighed using a statistical equation. This mechanical weighing of information ensured consistency across the board.

- Feedback Loop: The beauty of mechanical models is the ability to refine them continuously. By implementing a feedback loop, we could consistently refine our model, ensuring its accuracy and reliability improved over time.

- Separating 'Gut Feel' from the Decision Process: While intuition wasn't completely discarded, it was isolated from the decision-making process. Any 'gut feel' or intuitive insights about a candidate were noted separately and only consulted after the mechanical model had done its work. More often than not, the model aligned well with intuition, but when they diverged, the model took precedence.
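The weighting and the separation of gut feel can be sketched in a few lines of Python. This is a minimal illustration, not the exact equation I used: the criteria names, the 60/40 weights, the candidates, and the helper names (`Assessment`, `model_score`, `pick`) are all hypothetical.

```python
from dataclasses import dataclass

# Hypothetical weights in percent; they are assumptions and must sum to 100.
WEIGHTS = {"hard_skills": 60, "soft_skills": 40}


@dataclass
class Assessment:
    name: str
    scores: dict         # criterion -> score on a 0-100 scale
    gut_note: str = ""   # written down for later review, never scored


def model_score(assessment: Assessment) -> float:
    """Weighted average of the scored criteria; gut_note is ignored on purpose."""
    total = sum(WEIGHTS[c] * assessment.scores[c] for c in WEIGHTS)
    return total / 100


def pick(assessments):
    """Return the assessment with the highest model score."""
    return max(assessments, key=model_score)


# Made-up candidates: X is the intuitive favorite, Y is stronger on hard skills.
candidates = [
    Assessment("Candidate X", {"hard_skills": 60, "soft_skills": 90},
               gut_note="Instant rapport in the conversation"),
    Assessment("Candidate Y", {"hard_skills": 85, "soft_skills": 70}),
]

best = pick(candidates)
print(f"{best.name}: {model_score(best)}")
```

The gut note travels with the record so it can be consulted afterwards, but nothing in `model_score` can read it, which is the point of the separation.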

An illustrative example and how to implement this model

All assessment starts with a role description: what do you want to test? Let’s say we want to hire management consultants with five years of experience, a typical manager level at a management consulting firm. In this case, the ability to be a successful consultant would come down to:

- Problem-solving ability (measured with cognitive tests)

- Ambition (measured with interviews and tests)

- Business acumen (measured with interview and case)

- Leadership abilities (measured with interview & screening)

So let’s set up a process to measure that and score that.

The Setup

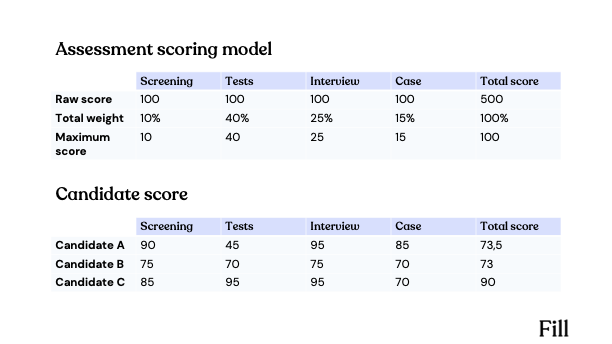

In the rigorous world of hiring for a manager-level role at a management consulting firm, a weighted mechanical model can ensure a balanced evaluation of candidates across multiple competencies. Here's a breakdown of how such a model would evaluate three hypothetical candidates: A, B, and C.

Step 1: Role Description

Position: Manager, Management Consulting

Experience Required: 5 years in management consulting

Key Abilities Required:

- Problem-solving ability

- Ambition

- Business acumen

- Leadership abilities

Step 2: The Assessment Process

1. Screening:

- A preliminary review of the candidate's qualifications and experience.

- Scoring: Each candidate can earn up to 100 points.

2. Test:

- A comprehensive assessment of cognitive abilities, personality traits, and other competencies pertinent to the role.

- Scoring: Up to 100 points based on the breadth and depth of knowledge and capabilities.

3. Interview:

- An in-depth discussion gauging candidates' ambitions, leadership experience, and business insights.

- Scoring: Candidates can achieve a maximum of 100 points based on their articulation and depth of answers.

4. Case:

- A real-world business problem to test candidates' problem-solving and analytical abilities.

- Scoring: Up to 100 points based on the effectiveness and innovation of the solution provided.

Weighted Model:

Step 3: Evaluation and Decision

With the weighted model, Candidate C is the standout choice with a score of 90, followed by Candidate A at 73.5 and Candidate B at 73, who are nearly tied.

As one can observe, depending on where the candidate's relative strengths lie, the outcome of the model will separate them. A weighted mechanical model ensures that emphasis is placed on areas deemed most critical for the role. In this scenario, the test score heavily influences the overall score. However, it's also crucial to consider intangible factors such as cultural fit and growth potential. Still, for a foundational, systematic evaluation, this model offers a robust framework.
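To show how the weights drive the outcome, here is a small Python sketch of a four-stage weighted model. The weights and stage scores below are made up for illustration and are not the figures behind the results above; the candidates are deliberately named X, Y, and Z to keep them apart from A, B, and C.

```python
# Hypothetical stage weights in percent; each set must sum to 100.
TEST_HEAVY = {"screening": 10, "test": 40, "interview": 20, "case": 30}
EQUAL = {"screening": 25, "test": 25, "interview": 25, "case": 25}

# Made-up stage scores (0-100) for three fictional candidates.
SCORES = {
    "X": {"screening": 80, "test": 60, "interview": 90, "case": 70},
    "Y": {"screening": 70, "test": 90, "interview": 60, "case": 70},
    "Z": {"screening": 90, "test": 70, "interview": 70, "case": 90},
}


def weighted_score(stage_scores, weights):
    """Combine the stage scores into one number on the same 0-100 scale."""
    if sum(weights.values()) != 100:
        raise ValueError("weights must sum to 100")
    return sum(weights[stage] * stage_scores[stage] for stage in weights) / 100


def rank(scores, weights):
    """Return (name, total) pairs sorted from highest to lowest total."""
    totals = {name: weighted_score(s, weights) for name, s in scores.items()}
    return sorted(totals.items(), key=lambda item: item[1], reverse=True)


print(rank(SCORES, TEST_HEAVY))
print(rank(SCORES, EQUAL))
```

With the test-heavy weights, Y (strong test, weaker interview) ranks ahead of X; with equal weights the two swap places. The candidates did not change, only the emphasis did, which is why the weights should be agreed on before anyone is scored.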

The challenge with mechanical models

Embracing mechanical models, as I've illustrated, can be a transformative step towards more objective and consistent decision-making. However, integrating these models into real-world scenarios isn't without its hurdles. Here, I delve deeper into the complexities and challenges I faced while trying to pivot from intuition-driven judgments to evidence-based models.

- The quest for comprehensive measurement: Mechanical models thrive on data. The more specific and detailed the data, the more accurate the model's predictions. But herein lies a challenge: How does one quantify every facet of an assessment, especially when dealing with human behavior, emotions, or potential? Some elements are inherently difficult to measure, and any attempt to force them into a numerical box can result in a loss of nuance. This sometimes led to an over-reliance on measurable attributes while sidelining essential qualitative insights.

- Adherence to the Process: The rigidity of mechanical models requires strict adherence to the process. Any deviation can compromise the model's reliability. However, in dynamic real-world situations, such strict adherence is often challenging. External pressures, timeline constraints, or unexpected changes can tempt decision-makers to take shortcuts, thus diluting the model's effectiveness.

- Balancing stakeholder expectations: Incorporating mechanical models in team settings often means dealing with multiple stakeholders, each with their own perceptions, biases, and reservations. Achieving consensus on the model's parameters, weighting, and process often becomes a delicate dance of negotiation. In many instances, I found that stakeholders needed extensive orientation to understand and trust the model over their intuitions.

- Inter-Assessor Reliability: Even with a mechanical model in place, there can be variations in how different assessors interpret or score certain attributes. This inter-assessor variability can skew results and lead to inconsistencies, thus challenging the model's primary objective of bringing uniformity to the decision-making process.

- Model vs. Gut: The Eternal Dilemma: Perhaps one of the most profound challenges is reconciling moments when the mechanical model's output diverges sharply from one's intuition. In these situations, does one override the gut feeling in favor of the model, or vice versa? Navigating this delicate balance often required a deeper dive into the data, reassessing the model's parameters, or even seeking external validation.
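Inter-assessor variability, at least, is something you can check with very little machinery. The sketch below flags candidates whose assessors disagree by more than a chosen tolerance. The ratings, the 10-point tolerance, and the function name are assumptions for illustration, and a simple spread check like this is not a formal reliability statistic.

```python
from statistics import mean

# Hypothetical interview scores (0-100) given by three assessors per candidate.
RATINGS = {
    "X": [80, 82, 78],
    "Y": [60, 85, 70],
}

MAX_SPREAD = 10  # assumed tolerance, in points, before a calibration talk


def review_agreement(ratings, max_spread=MAX_SPREAD):
    """Summarize each candidate's scores and flag large disagreements."""
    report = {}
    for name, scores in ratings.items():
        spread = max(scores) - min(scores)  # gap between harshest and kindest
        report[name] = {
            "mean": mean(scores),
            "spread": spread,
            "needs_calibration": spread > max_spread,
        }
    return report


for name, row in review_agreement(RATINGS).items():
    print(name, row)
```

A flagged candidate does not mean anyone scored wrongly. It means the assessors should compare notes against the scoring guide before the number goes into the model.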

Mitigating the Challenges

While these challenges seem daunting, they're not insurmountable. Over time, I developed strategies to tackle these issues:

- Hybrid Models: Instead of a purely mechanical model, I found that a hybrid approach that allows for some qualitative insights (albeit structured) can capture nuances better.

- Regular Model Refinement: Based on feedback and real-world outcomes, the model should undergo periodic refinements to address gaps or biases.

- Training & Orientation: Spending time educating stakeholders and assessors about the model's value, parameters, and process can reduce friction and ensure better adherence.

- Scenario Testing: Before fully adopting a model, it's beneficial to test it in controlled scenarios to gauge its reliability and identify potential pitfalls.
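One simple way to approach refinement and scenario testing is to compare the scores the model gave at hiring time with how those hires were later rated. The sketch below computes a plain Pearson correlation for that purpose. All numbers are invented, the 1-5 performance scale is an assumption, and with only a handful of hires the result is a rough signal rather than proof.

```python
from math import sqrt
from statistics import mean


def pearson(xs, ys):
    """Pearson correlation between two equally long lists of numbers."""
    if len(xs) != len(ys) or len(xs) < 2:
        raise ValueError("need two lists of equal length with at least 2 items")
    mx, my = mean(xs), mean(ys)
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    var_x = sum((x - mx) ** 2 for x in xs)
    var_y = sum((y - my) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        raise ValueError("correlation is undefined when one list is constant")
    return cov / sqrt(var_x * var_y)


# Hypothetical past hires: model score at hiring (0-100) and a later
# performance rating on an assumed 1-5 scale.
model_scores = [62, 70, 75, 81, 90]
performance = [2.5, 3.0, 3.5, 3.0, 4.5]

r = pearson(model_scores, performance)
print(f"correlation between model score and later performance: {r:.2f}")
```

If the correlation stays low over a meaningful number of hires, that is a cue to revisit the criteria or the weights rather than to fall back on gut feel.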

Real-world Impact

By implementing these mechanical models, not only did our hiring efficiency improve, but we also saw a significant boost in team productivity and cohesion. Project deadlines were met more consistently, and overall team morale improved as each member felt they were working with colleagues who complemented their skills and expertise.

Moreover, the transparent and objective nature of the mechanical model meant that feedback from unsuccessful candidates was overwhelmingly positive. They appreciated the clarity of the process and often sought feedback on areas of improvement.

Conclusion

The conflict between intuition and evidence-based decision-making is age-old. While intuition, molded by personal experiences and biases, can occasionally guide us right, the consistency and reliability of mechanical models are unmatched. As I've learned through my experiences, in professional contexts, especially when the stakes are high, it's always wiser to trust the model. It might not have the allure of a 'gut feeling', but its results are predictably effective.

Bye for now.

Continued reading:

Part 1: Why hiring is the Everest of business challenges

Part 2: The arbitrage in hiring